Phased Approach to Data Warehouse Modernization

This article will help you plan the migration of your data warehouse loads into a new database or a cloud-based data warehouse.

A modernized database lets you focus on building innovative solutions rather than spending your time and effort managing legacy systems.

Depending on the scale of your existing data warehouse processes or jobs, modernization can be an enormous task. Taking a phased approach to the migration helps surface and mitigate risks early and supports a smooth journey overall.

What Does It Really Take?

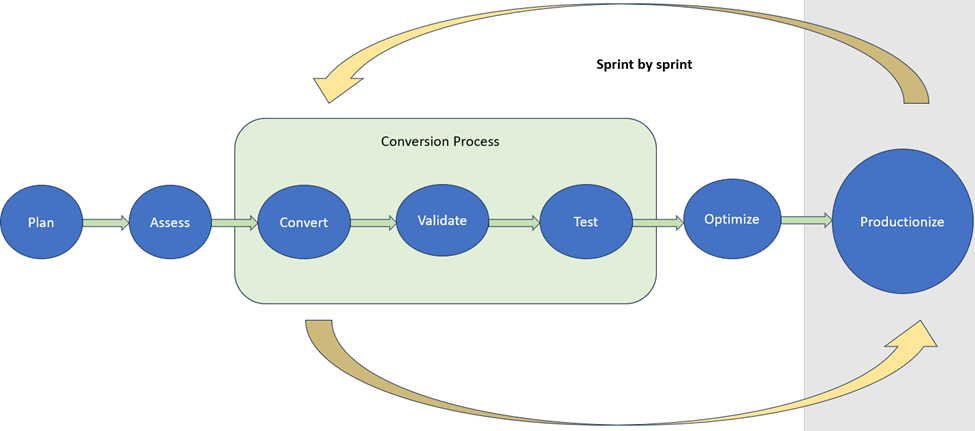

Here is what a phased approach to the modernization journey looks like.

Planning

Planning is the key to success, and it depends on understanding the scale and effort involved. Always be prepared to re-plan based on initial learnings. Prioritize your workloads and lay out sprints that cover all the systems to be converted, starting with the low-complexity ones. Training is a critical planning milestone: getting each SME trained in their own area early on is a major driving factor for the rest of the program.

Assessment

A comprehensive assessment must be done to identify the use cases or patterns in your existing workloads. For the new platform, understand the gaps and define a metric and scale for the effort needed to implement each use case or pattern. Conversion effort can then be estimated from these metrics and from how often each pattern occurs. The deeper this analysis goes, the more realistic the estimate will be and the closer you will get to a smooth transition.
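
As a rough illustration of turning assessment findings into an effort estimate, here is a minimal Python sketch. The pattern names, the hours per pattern, and the `estimate_effort` helper are all hypothetical; your own assessment would supply the real patterns and weights.

```python
from collections import Counter

# Hypothetical effort weights (hours per occurrence) for patterns found
# during assessment. Names and numbers are illustrative assumptions only.
EFFORT_HOURS = {
    "simple_insert_select": 1.0,
    "scd_type_2_merge": 6.0,
    "proprietary_function": 4.0,
}


def estimate_effort(job_patterns, weights=EFFORT_HOURS, default_hours=8.0):
    """Estimate conversion hours per job and in total.

    job_patterns maps a job name to the list of patterns found in it.
    Patterns without a known weight fall back to default_hours, which
    keeps the estimate conservative for anything not yet analyzed.
    """
    per_job = {}
    for job, patterns in job_patterns.items():
        counts = Counter(patterns)
        per_job[job] = sum(
            weights.get(pattern, default_hours) * n
            for pattern, n in counts.items()
        )
    return per_job, sum(per_job.values())


# Hypothetical inventory produced by the assessment.
inventory = {
    "load_customers": ["simple_insert_select", "scd_type_2_merge"],
    "load_orders": [
        "simple_insert_select",
        "simple_insert_select",
        "proprietary_function",
    ],
}

per_job, total = estimate_effort(inventory)
print(per_job, total)
```

Revisiting the weights after the first sprints is one way to feed real conversion experience back into the plan.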

Conversion

Code conversion is the core of the effort, and the gaps identified during assessment should feed into it. This information should be available to the hands-on developers so that they can use it in their reconciliation processes. Start the conversion with low-risk, less complex processes that together cover as many use cases or patterns as possible; what you learn there can feed into any re-planning or reassessment needed. Expect the first couple of sprints to be slow. It is okay to spend extra time here because this stage is extremely critical.

Validation

Build automated validation processes that validate metadata and produce metadata reconciliation reports for the entire sprint in bulk. Keep a well-defined checklist of validation items for each process, down to the most granular level. For any differences this validation finds, build an automated way of fixing the conversion issues in bulk.
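
A metadata reconciliation report can be sketched in a few lines of Python. This is only an illustration: the catalog layout (table name mapped to a dictionary of column names and types) and the `reconcile_metadata` helper are assumptions, and the sketch assumes data types have already been normalized to a common vocabulary across the two platforms.

```python
def reconcile_metadata(source, target):
    """Compare column metadata between two catalogs.

    Each catalog maps table name -> {column name: data type}.
    Returns a list of (table, column, issue) tuples; column is None
    for table-level issues.
    """
    issues = []
    for table in sorted(source.keys() | target.keys()):
        if table not in target:
            issues.append((table, None, "missing table in target"))
            continue
        if table not in source:
            issues.append((table, None, "unexpected table in target"))
            continue
        src_cols, tgt_cols = source[table], target[table]
        for col in sorted(src_cols.keys() | tgt_cols.keys()):
            if col not in tgt_cols:
                issues.append((table, col, "missing column in target"))
            elif col not in src_cols:
                issues.append((table, col, "unexpected column in target"))
            elif src_cols[col] != tgt_cols[col]:
                issues.append(
                    (table, col,
                     f"type mismatch: {src_cols[col]} vs {tgt_cols[col]}")
                )
    return issues


# Hypothetical catalogs for one sprint's worth of tables.
source_catalog = {
    "customers": {"id": "int", "name": "varchar"},
    "orders": {"id": "int"},
}
target_catalog = {
    "customers": {"id": "bigint", "name": "varchar"},
}

report = reconcile_metadata(source_catalog, target_catalog)
for row in report:
    print(row)
```

Because the report is plain data, the same list can drive a bulk fix step, for example by generating corrective DDL for every reported issue.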

Testing

Unit testing and data validation are the most critical areas of any conversion process, and the conversion becomes challenging if this stage does not get the right attention and time. Ensuring test data completeness and readiness is the key to success. Have well-defined data validation strategies, such as parallel environment testing, the snapshot method, or a mix of both, based on your situation and data availability within test environments.
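
One way to picture the snapshot method is to capture the output of the legacy process and the converted process for the same input, then compare the two row by row. The Python sketch below is a simplified illustration under several assumptions: rows fit in memory, each row is a tuple whose key sits at `key_index`, and values are represented identically on both platforms. The helper names are hypothetical.

```python
import hashlib


def row_fingerprint(row):
    """Hash a row (a tuple of values) into a stable hex digest."""
    # repr() keeps None distinct from an empty string; the unit
    # separator avoids accidental collisions between adjacent values.
    joined = "\x1f".join(repr(value) for value in row)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def compare_snapshots(legacy_rows, new_rows, key_index=0):
    """Compare two snapshots keyed by the column at key_index."""
    legacy = {row[key_index]: row_fingerprint(row) for row in legacy_rows}
    new = {row[key_index]: row_fingerprint(row) for row in new_rows}
    return {
        "missing_in_new": sorted(legacy.keys() - new.keys()),
        "extra_in_new": sorted(new.keys() - legacy.keys()),
        "changed": sorted(
            key for key in legacy.keys() & new.keys()
            if legacy[key] != new[key]
        ),
    }


# Hypothetical snapshots of the same table from both environments.
legacy_snapshot = [(1, "a", 10), (2, "b", 20), (3, "c", 30)]
new_snapshot = [(1, "a", 10), (2, "b", 25), (4, "d", 40)]

diff = compare_snapshots(legacy_snapshot, new_snapshot)
print(diff)
```

For large tables, the same idea is usually pushed into the databases themselves (row counts and aggregate checksums per partition) rather than pulling rows into Python.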

Optimize

Once you have well-tested code, focus on optimizing it. Conversions often leave out optimizations that are exclusive to the new system. Plan enough time and resources ahead of time for these optimizations. Even if you are already meeting your SLAs, look for optimizations around performance, scalability, cost, and maintainability to achieve your end goal.

Productionize

As with any migration project, various aspects of productionizing and cutting over the loads need to be planned out. While bringing the new code over from lower environments, retaining surrogate key values and any other sequence generator values is critical. Because this is a phased approach, there will be times when you are running in a hybrid mode, with existing processes and newly converted processes side by side. Establishing the dependencies between them in the right order, and with minimal impact, is critical.
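
To make the hybrid-mode dependency problem concrete, the following Python sketch orders a small set of jobs and lists the dependencies that cross between the legacy and new platforms, since those are the hand-off points that need explicit coordination during cutover. The job names, the platform assignments, and both helper functions are hypothetical; `graphlib` is part of the Python standard library from version 3.9.

```python
from graphlib import TopologicalSorter

# Hypothetical job graph: each job maps to the set of jobs it depends on.
dependencies = {
    "stg_orders": set(),
    "dim_customer": set(),
    "fact_orders": {"stg_orders", "dim_customer"},
    "sales_report": {"fact_orders"},
}

# Hypothetical state partway through the migration.
platform = {
    "stg_orders": "new",
    "dim_customer": "legacy",
    "fact_orders": "new",
    "sales_report": "legacy",
}


def run_order(deps):
    """Return one valid execution order (upstream jobs first)."""
    return list(TopologicalSorter(deps).static_order())


def cross_platform_edges(deps, platforms):
    """List (upstream, downstream) pairs that span the two platforms."""
    return sorted(
        (upstream, downstream)
        for downstream, upstreams in deps.items()
        for upstream in upstreams
        if platforms[upstream] != platforms[downstream]
    )


order = run_order(dependencies)
handoffs = cross_platform_edges(dependencies, platform)
print(order)
print(handoffs)
```

`TopologicalSorter` raises `graphlib.CycleError` if the dependencies contain a cycle, which is a useful early check before scheduling a hybrid run.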

Closing Thoughts

Whatever endpoints or platforms you choose, taking this phased approach will help you make a smooth transition to your modernized world.
